My other half likes to keep tabs on a few shares, and she’s been using a variety of tools, including ShareScope (www.sharescope.co.uk) and various stock tickers on her Windows desktop and Android mobile. One problem is that each source provides a different set of columns. The biggest issue, though, is the time it takes to update the lists in each place whenever she wants to change the stocks she’s following. I looked around quite a bit, and it seems clear that, with the advent of Windows 8, Microsoft isn’t keen on desktop widgets anymore. Some Android tickers do allow the stock list to be shared, but only manually, and it would be much nicer if the updates were automatic. I’ve also been getting requests from her for ex-dividend data. For UK stocks this doesn’t seem to be kept up to date on Yahoo Finance, and Yahoo is the only place most tickers seem to get their data from.

So I started thinking more about it. And the truth is I wanted an excuse to try some UI programming with Python, which I haven’t done before apart from some brief tinkering with Tkinter ages ago. I’d read about the Python bindings for Qt (PyQt) and figured this would be a good time to have a go.

Source code is here: https://github.com/robdobsn/QtStockTicker/

qt application and python

Qt and Python seem to make pretty good companions. I particularly like the way event handling (signals and slots) works in Qt. Even better is the Python way of connecting to a signal. For instance, if the signal is called “clicked” (emitted when a push button is clicked), then the wiring in Python is simply:

```python
buttonName.clicked.connect(self.methodName)
```
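Conceptually, that one line adds a callable to a list that the signal calls when it fires. The following stdlib-only toy mimics the connect/emit shape of the API. It is not how PyQt implements signals: real ones are declared with `pyqtSignal` and also deal with argument types and cross-thread delivery. `Signal`, `ToyButton` and `Window` are hypothetical names used only for illustration.

```python
class Signal:
    """Toy stand-in for a Qt signal: keeps a list of connected callables."""

    def __init__(self):
        self._slots = []

    def connect(self, slot):
        # Any callable can be a slot, including a bound method like self.methodName
        self._slots.append(slot)

    def emit(self, *args):
        # Call every connected slot, in the order they were connected
        for slot in self._slots:
            slot(*args)


class ToyButton:
    def __init__(self):
        self.clicked = Signal()

    def click(self):
        # A real QPushButton emits clicked when the user presses it
        self.clicked.emit()


class Window:
    def __init__(self):
        self.clicks = 0
        self.button = ToyButton()
        # Same wiring style as buttonName.clicked.connect(self.methodName)
        self.button.clicked.connect(self.onClicked)

    def onClicked(self):
        self.clicks += 1
```

Because `connect` simply stores the bound method, the slot runs with `self` already attached. That is why no lambda or extra wrapper is needed in the Python wiring.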

I can’t say that I liked Qt Designer much, though, and I ended up writing the UI in code. I think I was partly confused by Qt Quick, which I now gather is a separate, QML-based way of building UIs aimed largely at fluid, touch-style interfaces such as those on mobile devices. Anyhow, it confused me enough that I steered well clear of Qt Designer too.

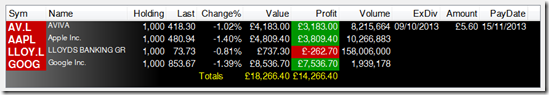

Fortunately, the result I wanted is simple to achieve with a QTableWidget.
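Here is a rough sketch of how quote data might be pushed into a QTableWidget. It assumes the data is held as `{ticker: {field: value}}`, like the dictionaries `get_quote()` returns further down. The column choice and the helper names `rows_for_table` and `fill_table` are hypothetical, not taken from the repository. PyQt5 is imported inside `fill_table` only, so the row-building part runs without Qt installed.

```python
# Hypothetical column layout: the first column is the ticker itself,
# the rest are keys looked up in each stock's quote dictionary.
HEADERS = ["Symbol", "Price", "Change", "Name"]
FIELDS = ["price", "change", "name"]


def rows_for_table(stock_data):
    """Convert {ticker: {field: value}} into row lists of strings, sorted by ticker.

    Missing fields become empty strings so every row has the same length.
    """
    rows = []
    for ticker in sorted(stock_data):
        info = stock_data[ticker]
        rows.append([ticker] + [str(info.get(field, "")) for field in FIELDS])
    return rows


def fill_table(table, rows):
    """Copy prepared rows into a PyQt5 QTableWidget."""
    from PyQt5.QtWidgets import QTableWidgetItem  # imported lazily; needs PyQt5

    table.setColumnCount(len(HEADERS))
    table.setHorizontalHeaderLabels(HEADERS)
    table.setRowCount(len(rows))
    for r, row in enumerate(rows):
        for c, text in enumerate(row):
            table.setItem(r, c, QTableWidgetItem(text))
```

Keeping the data-shaping step separate from the widget calls makes it easy to check, and leaves the Qt code as a thin copy loop.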

getting ex-dividend dates

I’m still not completely happy with the solution I found for this. It uses Selenium WebDriver with Firefox, and I haven’t managed to get it working headlessly, so Firefox pops up each time the data is scraped. Fortunately, this doesn’t need to happen very often because the data is only updated infrequently, so I’ve timed it to occur overnight. I also tried an approach without Selenium that relied on calling the page’s client-side JavaScript, but I didn’t get that to work reliably either.
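One simple way to run a job overnight is to sleep until a fixed time of day. This minimal sketch assumes a hypothetical 02:00 start and is not taken from the repository. It uses naive local time, so on a night when daylight saving changes it can fire an hour early or late.

```python
import datetime


def seconds_until(hour, now=None):
    """Seconds from `now` until the next hour:00 (naive local time).

    If that time has already passed today (or is exactly now), tomorrow's is used.
    """
    if now is None:
        now = datetime.datetime.now()
    target = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    if target <= now:
        target += datetime.timedelta(days=1)
    return (target - now).total_seconds()
```

In a scraping thread this could be used as `time.sleep(seconds_until(2))` before each scrape. At 23:30, for example, that works out to 9000 seconds (two and a half hours).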

A shortened version of the scraping code is here:

```python
import threading
import time

from bs4 import BeautifulSoup
from selenium import webdriver
from selenium.webdriver.common.by import By


class ExDivDates():

    def __init__(self):
        self.lock = threading.Lock()
        self.stocksExDivInfo = {}
        self.running = False

    def run(self):
        self.running = True
        self.t = threading.Thread(target=self.do_thread_scrape)
        self.t.start()

    def stop(self):
        self.running = False

    def do_thread_scrape(self):
        colNames = ['symbol', 'name', 'index', 'exDivDate', 'exDivAmount', 'paymentDate']
        while self.running:
            exDivInfoList = []
            urlbase = ".... site to scrape ...."
            pageURL = urlbase
            browser = webdriver.Firefox()  # Get local session of Firefox
            browser.get(pageURL)  # Load page
            curPagerNumber = 1  # page 1 is loaded first
            while True:
                # Get page and parse
                html = browser.page_source
                soup = BeautifulSoup(html, "lxml")
                rows = (soup.find_all('tr', {'class': 'exdividenditem'}) +
                        soup.find_all('tr', {'class': 'exdividendalternatingitem'}))
                # Extract stocks table info: each non-blank text cell fills the next column
                for row in rows:
                    colIdx = 0
                    stockExDivInfo = {}
                    for cell in row.find_all(string=True):
                        cell = cell.strip()
                        if cell == "":
                            continue
                        if colIdx < len(colNames):
                            if colNames[colIdx] == "symbol":
                                # Yahoo's London suffix: "VOD" -> "VOD.L", "BT." -> "BT.L"
                                cell = cell + ".L" if (cell[-1] != ".") else cell + "L"
                            stockExDivInfo[colNames[colIdx]] = cell
                        colIdx += 1
                    exDivInfoList.append(stockExDivInfo)
                # code to find the max pager number (maxPagerNumber) stripped out here ....
                # Find the pager and go to next page if applicable
                curPagerNumber += 1
                if curPagerNumber > maxPagerNumber:
                    break
                browser.find_element(By.XPATH, "//a[contains(.,'" + str(curPagerNumber) + "')]").click()
                time.sleep(3)
            # Close the browser and end the WebDriver session now we're done
            browser.quit()
            # Put found stocks into the dictionary of current data
            with self.lock:
                for stk in exDivInfoList:
                    if 'symbol' in stk:
                        self.stocksExDivInfo[stk['symbol']] = stk
            time.sleep(60)
```
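The loop above only checks `self.running` after its 60-second sleep, so a call to `stop()` can take up to a minute (plus a full scrape) to take effect. Here is a minimal alternative sketch that uses `threading.Event` instead of a boolean flag. `StoppableWorker` is a hypothetical name, not part of the repository, and `job` stands in for one complete scrape.

```python
import threading


class StoppableWorker:
    """Run `job` repeatedly on a background thread, waiting `interval`
    seconds between runs; stop() interrupts the wait immediately."""

    def __init__(self, job, interval):
        self.job = job
        self.interval = interval
        self._stop_event = threading.Event()
        self._thread = threading.Thread(target=self._loop, daemon=True)

    def run(self):
        self._thread.start()

    def stop(self, timeout=None):
        self._stop_event.set()
        self._thread.join(timeout)

    def _loop(self):
        while not self._stop_event.is_set():
            self.job()
            # Event.wait() returns True as soon as stop() sets the event,
            # so shutdown doesn't have to sit out the rest of the interval.
            if self._stop_event.wait(self.interval):
                break
```

The event also makes the shared state explicit. Both threads use the same thread-safe `Event` object rather than a plain attribute that one thread writes and the other polls.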

getting stock values

I’d done a bit of googling and found the ystockquote library for Python. Pretty much everyone who writes about getting stock data into a Python ticker seems to use ystockquote. It didn’t give me the data I was looking for, though, so I copied the very small amount of code I needed into my own source file, where I could tinker with it without changing the library.

The following is a simplified version of the code which runs in its own thread getting stock values.

```python
import threading
import time
from urllib.request import Request, urlopen


class StockValues:

    def __init__(self):
        # various other initialisation code missed out here to save space
        self.lock = threading.Lock()
        self.listUpdateLock = threading.Lock()
        self.tickerlist = []
        self.pendingTickerlist = None
        self.stockData = {}
        self.running = False

    def setStocks(self, stockList):
        # Hand the new list to the worker thread; it is picked up after the current pass
        with self.listUpdateLock:
            self.pendingTickerlist = stockList

    def run(self):
        self.running = True
        self.t = threading.Thread(target=self.do_thread_loop)
        self.t.start()

    def do_thread_loop(self):
        while self.running:
            for ticker in self.tickerlist:
                try:
                    stkdata = self.get_quote(ticker)
                except Exception as excp:
                    print("get_quote failed for " + ticker + ": " + str(excp))
                    continue
                # Record when this quote was fetched
                stkdata['time'] = time.time()
                with self.lock:
                    self.stockData[ticker] = stkdata
                time.sleep(1)
            if not self.tickerlist:
                time.sleep(1)  # nothing to fetch yet, so avoid a busy loop
            # Check if the stock list has been updated
            with self.listUpdateLock:
                if self.pendingTickerlist is not None:
                    self.tickerlist = self.pendingTickerlist
                    self.pendingTickerlist = None

    def get_quote(self, symbol):
        """
        Get all available quote data for the given ticker symbol.
        Returns a dictionary.
        """
        values = self._request(symbol, 'l1c1va2xj1b4j4dyekjm3m4rr5p5p6s7abghp2opqr1n').split(',')
        return dict(
            price=values[0],
            change=values[1],
            # ... other values removed here for brevity
            name=self.stripQuotes(values[29]),  # stripQuotes() is among the omitted helpers
        )

    # Borrowed from ystockquote
    def _request(self, symbol, stat):
        url = 'http://finance.yahoo.com/d/quotes.csv?s=%s&f=%s' % (symbol, stat)
        req = Request(url)
        resp = urlopen(req)
        return str(resp.read().decode('utf-8').strip())
```
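Splitting the response with `split(',')` breaks as soon as a quoted field contains a comma. A company name with a comma in it, for example, would be cut in two. Here is a minimal sketch using the standard `csv` module, which honours the double quotes and strips them, so no separate quote-stripping step is needed. The helper name `split_quote_line` is hypothetical. The Yahoo `quotes.csv` endpoint has since been withdrawn, so treat this purely as an illustration of the parsing.

```python
import csv


def split_quote_line(line):
    """Split one CSV line into fields, keeping quoted commas inside their field."""
    return next(csv.reader([line]))
```

With this, `get_quote()` could use `split_quote_line(self._request(...))` in place of `.split(',')`, and the field positions would no longer depend on the data itself.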

storing the stock list so it can be accessed locally and on the hoof

I probably spent the best part of two days getting a seemingly simple aspect of the application working properly. The idea was that whenever the list of stocks to be watched changed, the changes would be written to several places, with version numbers to show which copy was the most up to date. What I wanted was a simple system that would let the user make a change at home and have a mobile device pick up the new stock list seamlessly. I still haven’t implemented any form of change notification, so clients have to poll. Even so, it took me a long time to get the necessary data transfers working.

I’d decided that a simple JSON-encoded file would be the easiest way to store the data, and that I’d use a web/FTP hosting service as one repository for the file. Other repositories could be on the local computer and on a server in the house. The logic is that if there is no internet access, the server (or local) copy is used, and the “cloud” copy is updated when it next becomes available. When I get around to developing a mobile version, it will work similarly and should operate successfully on either the home WiFi or mobile data.
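Deciding which copy is the most up to date could look something like this minimal sketch. It assumes each stored stock list carries a hypothetical top-level `"version"` number, and that an unreachable location is represented by `None`. The helper `newest_copy` is illustrative, not the repository's code.

```python
def newest_copy(copies):
    """Pick the most recent stock list from several locations.

    `copies` is a list of (location_name, data) pairs, where data is the decoded
    JSON dict, or None if that location couldn't be read. Returns the pair with
    the highest "version", preferring the earlier location on a tie, or None if
    no location was readable.
    """
    readable = [(name, data) for name, data in copies if data is not None]
    if not readable:
        return None
    # max() returns the first of several equal maxima, so list order breaks ties
    return max(readable, key=lambda pair: pair[1].get("version", 0))
```

Once the winner is known, any location holding an older version can be rewritten with it. This is how the “cloud” copy catches up after a spell without internet access.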

My problems really all stemmed from my indecision about whether to use a binary or a text file, and from the various binds I got into each time I changed my mind. Some of the issues that cropped up were:

- The Python 3 ftplib doesn’t accept a text file, even for ASCII-mode STOR operations (storlines() still expects a file opened in binary mode)

- The Python json library’s load() method won’t accept data read directly from a binary file (it expects a string, not bytes)

- The text attribute of a requests response is a string, which can’t be written directly to a binary file

To make sense of all this, I tried to get to grips with Python 3’s Unicode support through this document: http://docs.python.org/3/howto/unicode.html. I got thoroughly bogged down in the first few sections on Unicode history, encodings and references. I don’t think I even made it to the section entitled “Python’s Unicode Support”, which is actually quite simple to follow. If I had, I would have realised that all that might have been needed was a call to decode('utf-8') here and there, and to encode('utf-8') when going the other way.

Anyhow, I didn’t realise that and got around the problems by using text files and re-writing them into binary files when absolutely necessary – as is the case for the ftplib calls.
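For comparison, here is a minimal sketch of the encode/decode route, which needs no intermediate text file. The helper names are hypothetical. `FTP.storbinary()` and `json.loads()` are the standard library calls. Since Python 3.6, `json.loads()` also accepts bytes directly, and a requests response exposes its raw bytes as `.content`.

```python
import io
import json
from ftplib import FTP


def to_json_bytes(data):
    """Encode data as UTF-8 JSON in an in-memory binary file, ready for ftplib."""
    return io.BytesIO(json.dumps(data, indent=2).encode("utf-8"))


def from_json_bytes(raw):
    """Decode bytes (from a binary file, FTP download or HTTP body) back into data."""
    return json.loads(raw.decode("utf-8"))


def upload_stock_list(host, user, password, remote_name, data):
    """Upload the stock list by FTP without writing a temporary file."""
    with FTP(host) as ftp:
        ftp.login(user, password)
        ftp.storbinary("STOR " + remote_name, to_json_bytes(data))
```

Keeping everything as bytes at the I/O boundary, and as str/dict inside the program, avoids converting back and forth between text and binary files.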

populating a list of symbols that can be watched

One of the more difficult things turned out to be finding the stock ticker symbols that Yahoo uses. Yahoo doesn’t seem to publish an easily used list, but eventually I found one on the www.lse.co.uk site that provides the information I was looking for. It doesn’t include US symbols or symbols for the UK’s AIM market, but I guess those could be added. In the end I decided to scrape www.lse.co.uk to retrieve the list of symbols. This isn’t done very often, and it ensures the list doesn’t get out of date. I do hope the site doesn’t change its layout, though.

```python
import re

import requests
from bs4 import BeautifulSoup


class StockSymbolList():

    def getStocksFromWeb(self):
        self.stockList = []
        r = requests.get('http://www.lse.co.uk/index-constituents.asp?index=idx:asx')
        soup = BeautifulSoup(r.text, "lxml")
        for x in soup.find_all('a', attrs={'class': "linkTabs"}):
            # Link text looks like "Company Name (SYMBOL)"
            mtch = re.match(r"(.+?)\((.+?)\)", x.text)
            if mtch is not None and mtch.lastindex == 2:
                coName = mtch.group(1).strip()
                # Append .L to make it work with Yahoo
                symb = mtch.group(2) + ".L" if (mtch.group(2)[-1] != '.') else mtch.group(2) + "L"
                self.stockList.append([coName, symb])
            else:
                print("Failed Match", x.text)
```
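The “append .L for Yahoo” rule appears both here and in the ex-dividend scraper. Here is a small sketch of factoring it, and the link-text parsing, into testable helpers. The helper names are hypothetical, and the regular expression is the one used above.

```python
import re

# Link text on the constituents page looks like "Company Name (SYMBOL)"
LINK_RE = re.compile(r"(.+?)\((.+?)\)")


def to_yahoo_symbol(epic):
    """Add Yahoo's London suffix: 'VOD' -> 'VOD.L', 'BT.' -> 'BT.L'."""
    return epic + "L" if epic.endswith(".") else epic + ".L"


def parse_link_text(text):
    """Return (company_name, yahoo_symbol), or None if the text doesn't match."""
    match = LINK_RE.match(text)
    if match is None:
        return None
    return match.group(1).strip(), to_yahoo_symbol(match.group(2).strip())
```

Using `endswith(".")` instead of indexing with `[-1]` also avoids an IndexError if an empty symbol ever turns up.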

stocktickerconfig.json … configuration file

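For the program to work, it needs a configuration file stored alongside the source code. The file defines where the stock list is kept and how each copy is accessed. In this example there is a web host that is read over HTTP and written by FTP, a file share on a server in the house, and a local file.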

```json
{
    "FileVersion": 0,
    "ConfigLocations":
    [
        {
            "hostURLForGet": "http://mysite.com", "filePathForGet": "/mypath/stocklist.json", "getUsing": "http",
            "hostURLForPut": "mysite.com", "filePathForPut": "stocklist.json", "putUsing": "ftp",
            "userName": "username", "passWord": "password"
        },
        {
            "hostURLForGet": "//server/share", "filePathForGet": "/path/stocklist.json", "getUsing": "local",
            "hostURLForPut": "//server/share", "filePathForPut": "/path/stocklist.json", "putUsing": "local",
            "userName": "", "passWord": ""
        },
        {
            "hostURLForGet": "", "filePathForGet": "stocklist.json", "getUsing": "local",
            "hostURLForPut": "", "filePathForPut": "stocklist.json", "putUsing": "local",
            "userName": "", "passWord": ""
        }
    ]
}
```
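Here is a sketch of how the “get” half of each location might be used, trying the locations in order and falling back when one is unreachable. It assumes the full address is simply `hostURLForGet + filePathForGet`, which gives sensible results for all three entries above. The function names are hypothetical, not the repository's.

```python
import json
from urllib.request import urlopen


def read_from_location(loc, timeout=10):
    """Fetch and decode the stock list from one ConfigLocations entry."""
    address = loc["hostURLForGet"] + loc["filePathForGet"]  # assumed joining rule
    if loc["getUsing"] == "http":
        with urlopen(address, timeout=timeout) as resp:
            return json.loads(resp.read().decode("utf-8"))
    if loc["getUsing"] == "local":
        with open(address, encoding="utf-8") as f:
            return json.load(f)
    raise ValueError("unknown getUsing: " + loc["getUsing"])


def read_first_available(config):
    """Return (index, data) for the first location that can be read, else None."""
    for idx, loc in enumerate(config["ConfigLocations"]):
        try:
            return idx, read_from_location(loc)
        except (OSError, ValueError):
            # Network errors (URLError is an OSError), missing files and bad JSON
            # (JSONDecodeError is a ValueError) all mean: try the next location.
            continue
    return None
```

Listing the cloud copy first means it is preferred whenever the internet is reachable. The house server and local file act as fallbacks.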

note: installing Qt5 and PyQt

This isn’t a seamless process on Windows, but it involves the following steps:

- Download and install Qt5.1
  - http://qt-project.org/downloads – I chose 32bit VS2010 OpenGL, but there is also an online installer (though I don’t think it installs the docs)
- SIP package to make Python bindings – I don’t think this is actually needed with the binary download of PyQt5, but I installed it nonetheless
  - SIP – a tool for generating Python bindings for C and C++ libraries
  - http://www.riverbankcomputing.co.uk/software/sip/download
  - Extract to downloads C:\Users\rob\Downloads\sip-4.14.7-extracted
  - Open a command prompt and navigate to the extracted folder
  - c:\python33\python.exe configure.py
- Install PyQt5
  - http://www.riverbankcomputing.co.uk/software/pyqt/download5 – I went for the 32bit Windows exe
- Other dependencies
  - easy_install beautifulsoup4
  - easy_install selenium
  - easy_install requests
  - lxml parser (used in conjunction with beautifulsoup4)
    - http://www.lfd.uci.edu/~gohlke/pythonlibs/ – lxml-3.2.3.win32-py3.3.exe